Make vs. Buy: The Case for Professional Software in the Age of AI

Spencer Penn

AI has made the first week of building software look much cheaper than it really is.

That is the trap.

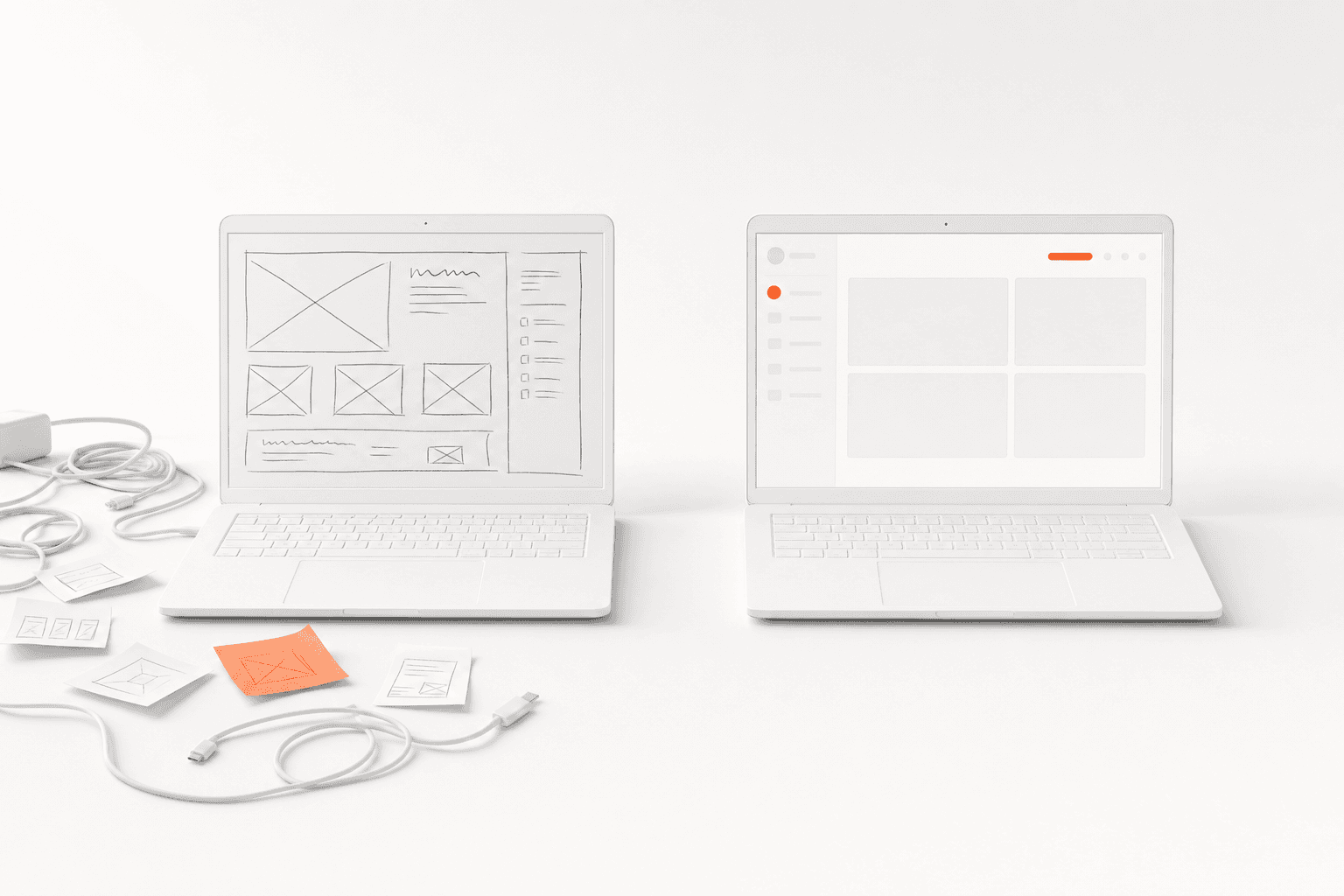

A buyer, engineer, or IT team can now sit down with Cursor, Replit, Lovable, Claude, or GitHub Copilot and get a working prototype in a few days. The app has login. It has a dashboard. It connects to a spreadsheet. It summarizes supplier quotes. It can probably generate a chart that looks good in the Monday staff meeting.

Then someone asks the natural question: why are we paying a vendor six figures for this?

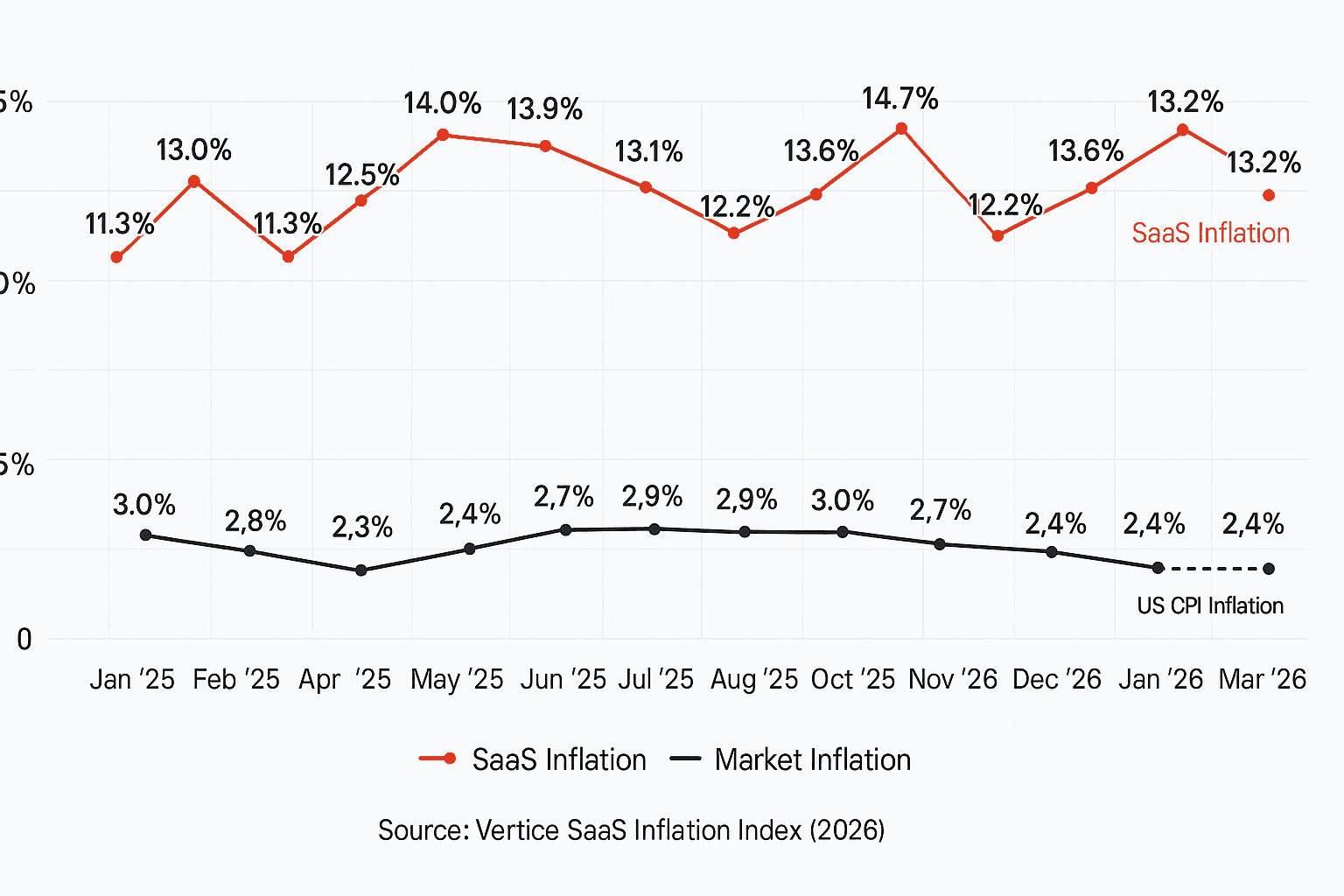

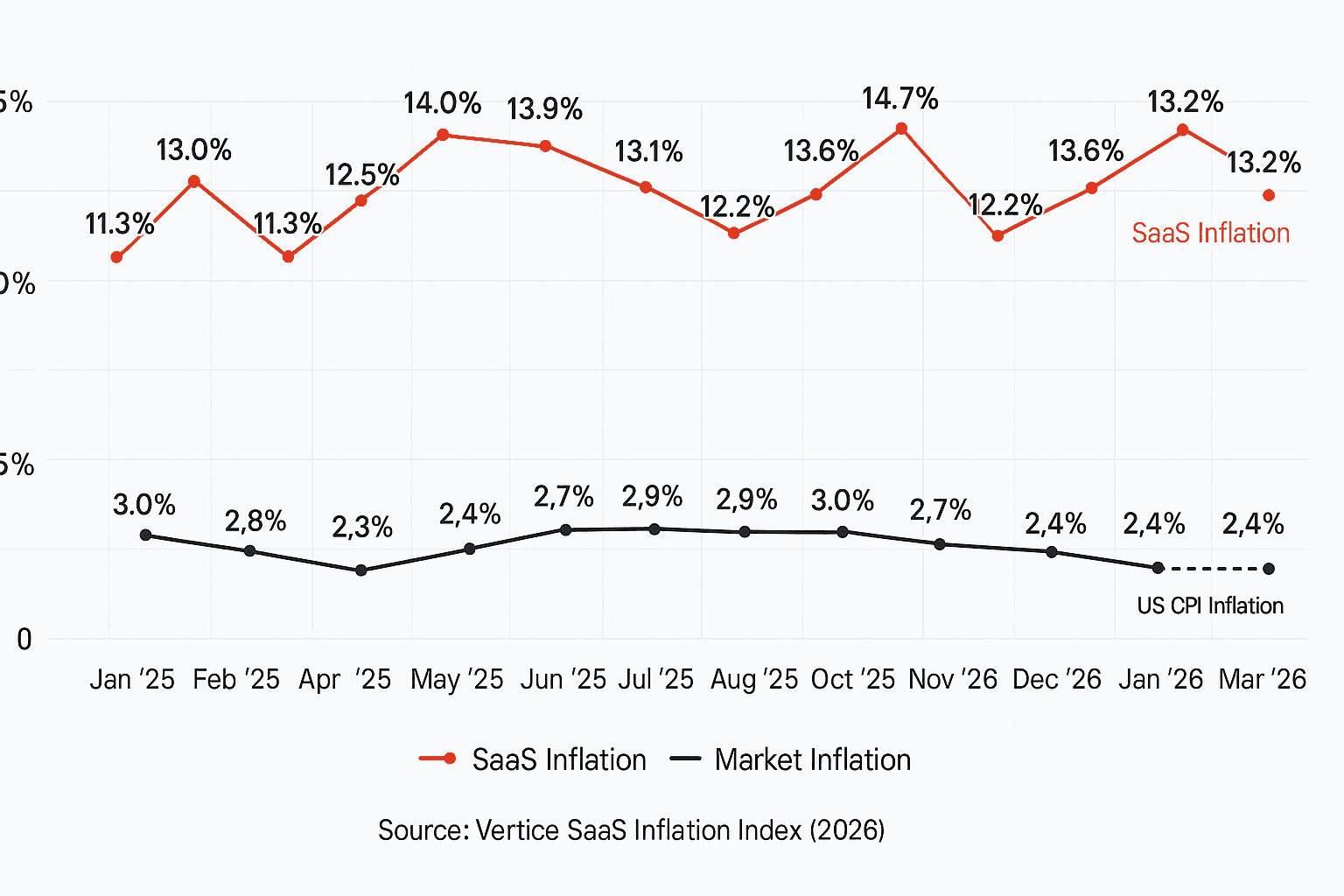

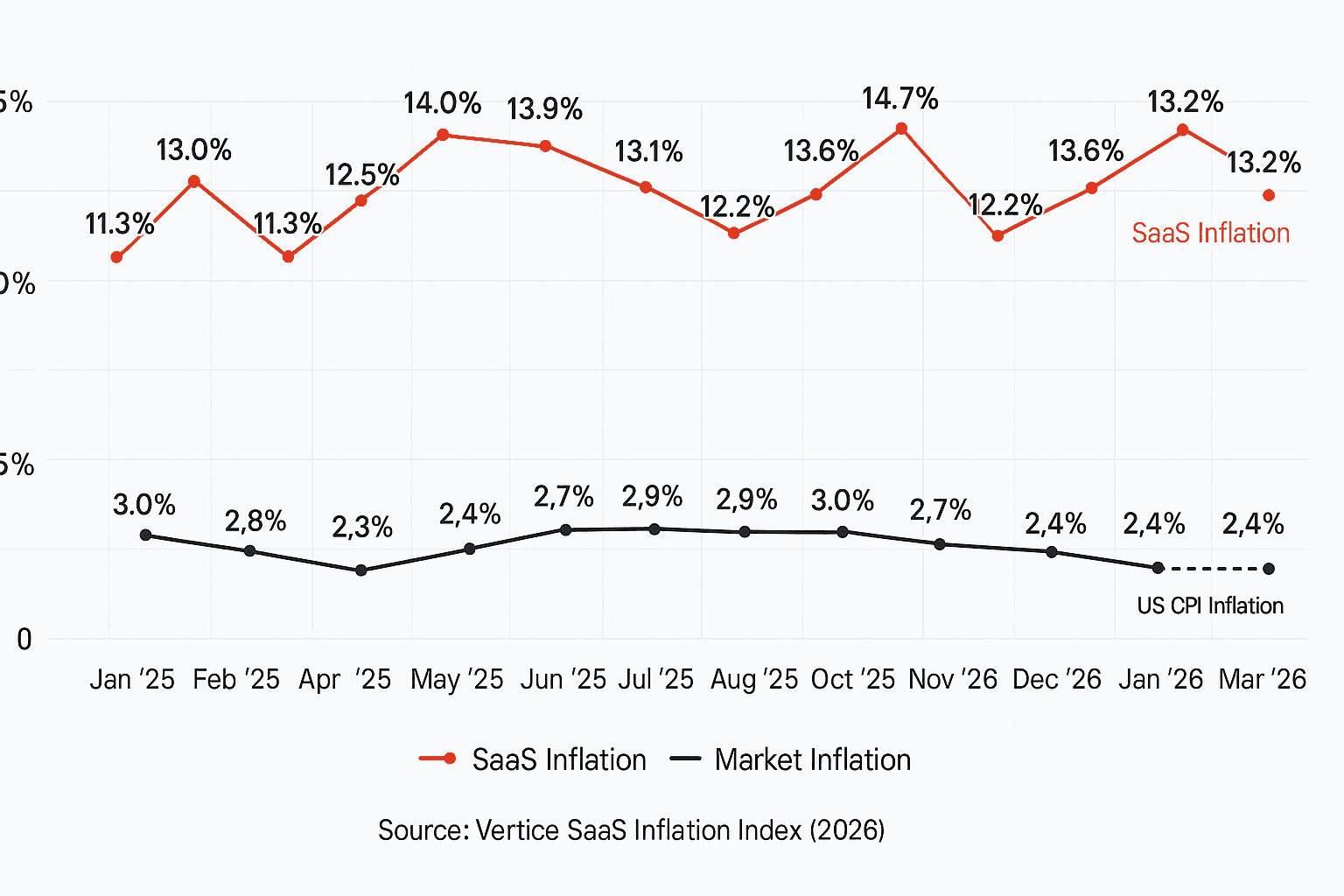

It is a fair question. SaaS costs have gone up. Vertice estimated that SaaS spend reached $9,100 per employee in 2025, up from $7,900 in 2023. SaaStr has argued that a large share of recent SaaS revenue growth is coming from price increases rather than new customers. Every CFO has seen the same pattern: a tool starts cheap, becomes embedded in the workflow, then the renewal comes back 18% higher.

So yes, the instinct to build is rational.

But the make-vs-buy question has changed in a way that is easy to miss. AI has lowered the cost of making a prototype. It has not lowered the cost of owning a production system. In some cases, it has raised it, because more code is being created by people who do not have a clear plan for maintaining it.

The question is not “can we build this?”

The question is “do we want to be responsible for this software three years from now?”

The prototype is not the product

Most internal software projects look good at the beginning because the first demo captures only the happy path.

Let’s say a procurement team wants a tool to compare supplier quotes. The team has an Excel template. They ask IT for help. Someone builds a small app that lets suppliers upload quotes, normalizes line items, and flags the lowest cost by part number.

Pretty simple, right?

Now the real work starts.

One supplier quotes in kilograms and another in pounds. One includes freight and another excludes it. One supplier uses a parent-child part structure. One quote comes in with a minimum order quantity that breaks the unit economics. One supplier adds a tooling amortization charge over 18 months. One file is in German. One supplier submits a revised quote after the deadline, but the buyer wants to keep both versions because the audit trail matters.
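To make a few of these cases concrete, here is a minimal Python sketch that puts two quotes on a comparable per-kilogram basis. The `Quote` fields, the choice to spread tooling over volume rather than months, and every number are hypothetical. A real tool would also need currency, minimum order quantity, and quote-version handling.

```python
from dataclasses import dataclass

LB_PER_KG = 2.20462262185  # pounds in one kilogram


@dataclass
class Quote:
    supplier: str
    unit_price: float              # price per `unit`, one currency assumed
    unit: str                      # "kg" or "lb"
    freight_per_kg: float = 0.0    # leave at 0.0 when freight is already included
    tooling_charge: float = 0.0    # one-time tooling cost quoted separately
    amortization_kg: float = 0.0   # volume over which the tooling is spread


def price_per_kg(q: Quote) -> float:
    """Return a comparable per-kilogram cost including freight and tooling."""
    if q.unit == "kg":
        base = q.unit_price
    elif q.unit == "lb":
        base = q.unit_price * LB_PER_KG  # a per-lb price covers less material
    else:
        raise ValueError(f"unsupported unit: {q.unit!r}")
    if q.tooling_charge and q.amortization_kg <= 0:
        raise ValueError("tooling charge needs a positive amortization volume")
    tooling = q.tooling_charge / q.amortization_kg if q.tooling_charge else 0.0
    return base + q.freight_per_kg + tooling


# Hypothetical quotes: A includes freight, B quotes per pound and adds extras.
quote_a = Quote("Supplier A", unit_price=4.00, unit="kg")
quote_b = Quote("Supplier B", unit_price=1.70, unit="lb",
                freight_per_kg=0.15, tooling_charge=9000, amortization_kg=60000)

for q in (quote_a, quote_b):
    print(f"{q.supplier}: {price_per_kg(q):.2f} per kg")
```

Supplier B has the lower sticker price in this made-up example, yet it comes out slightly above Supplier A once units, freight, and tooling are accounted for. A "lowest cost by part number" flag that ignores those fields would pick the wrong supplier.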

The first version of the app handled the demo. The production version has to handle reality.

This is where professional software earns its keep. Not because vendors are magically smarter than internal teams. They are not. Professional software is valuable because the vendor has already lived through more edge cases than one company will see on its own.

A bug found by one customer becomes a fix for many customers. A security review done for one large customer improves the product for the next one. A workflow that breaks during one implementation gets hardened before the next deployment.

That shared learning curve is easy to undervalue when you are looking at a prototype. It is obvious when you are on call for the system.

AI made code cheaper. It did not make maintenance cheaper.

The strongest argument for building is that AI changes the labor curve.

A small internal team can now write more code than it could two years ago. A business analyst can sketch a workflow. A software engineer can scaffold an application quickly. A procurement ops person can generate scripts to clean messy supplier data. This is real.

But more code is not the same as more software.

The evidence so far is not comforting. Veracode reported in its 2025 State of Software Security work that 45% of AI-generated code samples failed security tests. Trend Micro researchers found 69 vulnerabilities across 15 vibe-coded test applications. Other studies have found AI-generated code with materially higher vulnerability rates than human-written code, more duplication, and more debugging work than expected.

That last point matters. The expensive part of internal software is usually not writing version one. It is debugging version nine, when the original author has moved teams, the business process has changed, and nobody remembers why the database has three different supplier ID fields.

Gartner has long estimated that maintenance consumes the majority of IT budgets, often cited in the 55% to 80% range. Software maintenance is commonly estimated at 50% to 80% of total ownership cost. Those numbers sound high until you look at the backlog of any large IT organization: migrations, security patches, permission issues, broken integrations, performance problems, audit requests, and “small” workflow changes that require a schema change.

AI helps with some of that. It can explain code. It can write tests. It can suggest fixes.

It also creates a new failure mode: code that looks plausible enough to ship before anyone understands it.

That is why developer trust in AI coding tools has become more complicated. Stack Overflow’s 2025 Developer Survey found that trust in AI-generated output remains low, with only 29% of developers reporting that they trust AI tools. Developers are not rejecting AI. They are learning where the boundary is.

The boundary is production ownership.

When building is the right answer

The case for buying professional software is not the same as saying every company should outsource every tool.

Some software should be built.

If the software is the product, build it. If the workflow is a core operating advantage, build it. If no vendor can understand the physics, constraints, or timing of the problem well enough, build it.

Back at Waymo, simulation was not a generic analytics tool. It was central to how the company evaluated autonomous driving behavior. You could not buy an off-the-shelf simulator that captured the specific agent behavior, sensor stack, scenario generation, and evaluation loops the team needed. The software was part of the company’s technical advantage.

At Tesla during Model 3 new product introduction (NPI), there were many cases where internal tooling made sense because the product, factory, and supply base were changing at the same time. A general-purpose tool often could not keep up with the rate of iteration. When the process itself is being invented, buying can force you into the wrong operating model.

That is the strongest counterargument to the buy case: vendors tend to encode yesterday’s common process. If you are doing something genuinely different, professional software can slow you down.

But that argument is narrower than people think.

Most companies are not building software because the workflow is uniquely strategic. They are building because the vendor quote felt high, the implementation looked annoying, or someone on the team got excited after a good AI-assisted demo.

Those are not bad reasons to investigate building. They are bad reasons to commit to owning a system indefinitely.

A useful test is this: if the software works perfectly, does it become part of how you beat competitors?

If yes, consider building.

If no, be careful. You may be signing up to maintain plumbing.

Buying has real downsides

The lazy version of the buy argument ignores the pain of buying software.

SaaS inflation is real. Vendor lock-in is real. Bad implementations are real. Some software gets sold by people who have never done the job the product claims to support. Some tools require so much configuration that the customer effectively becomes the systems integrator. Some vendors make data export painful because they know customers will leave if switching is easy.

There is also a more serious risk: buying software can create false distance from accountability.

The UK Post Office Horizon scandal is the extreme case. Horizon was an accounting and point-of-sale system built by Fujitsu and used by the Post Office. Software errors contributed to shortfalls that were blamed on subpostmasters. Hundreds of people were prosecuted, many lives were damaged, and the public inquiry has spent years unpacking what went wrong.

That case is not a normal SaaS implementation. It is much more severe. But it makes one point clearly: buying software does not remove responsibility for how the system behaves. If a company uses software to make decisions about money, people, quality, or compliance, the company still owns the consequences.

So the right make-vs-buy frame is not “vendors good, internal teams bad.”

It is more specific.

Buy when the vendor’s learning curve, security posture, support model, and product depth are better than what you can reasonably build and maintain. Build when the system encodes a capability that is central to your company’s advantage and cannot be purchased without giving up that advantage.

Everything else requires discipline.

The hidden question is who gets paged

Internal tools often fail because nobody wants to be the product manager after launch.

During the build phase, the project has energy. There is a Slack channel. The VP cares. IT has assigned engineers. The business team is responsive because they want the tool.

Six months later, the original sponsor has moved on. The engineer who wrote most of the code is working on a higher-priority project. The business team wants four changes because the workflow evolved. A supplier cannot log in. The API token expired. The dashboard numbers do not match finance.

Who owns it?

This question sounds operational, but it is the make-vs-buy question in plain English.

With a vendor, you still need an internal owner. You need someone who understands the workflow, manages configuration, pushes for adoption, handles vendor governance, and checks whether the product is doing what was promised.

But you do not need to own every incident. You do not need to patch every dependency. You do not need to staff weekend coverage for a tool that is important but not central to your engineering roadmap.

With internal software, you do.

This is where the cost model often lies. The build estimate includes two engineers for twelve weeks. It rarely includes support rotations, documentation, access reviews, penetration testing, data retention policies, disaster recovery, roadmap planning, onboarding materials, and the cost of business users losing trust when the system breaks twice in one month.

If the system touches suppliers, customers, payments, quality, or regulated records, that missing work is not optional.

A real failure mode: Knight Capital

Knight Capital is one of the clearest examples of software ownership going wrong.

On August 1, 2012, Knight deployed code related to its trading systems. A dormant function was accidentally activated on some servers. In roughly 45 minutes, the firm sent millions of erroneous orders into the market and lost about $440 million. The SEC later described failures in deployment controls, testing, and supervisory procedures.

This was not a vibe-coded procurement app. Knight was a sophisticated financial firm. That is exactly why the example matters.

The lesson is not that internal software is bad. Trading firms often should build trading systems. The lesson is that production software is an operating commitment. Deployment discipline, monitoring, rollback plans, change management, and ownership structures matter as much as the code.
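As one small illustration of deployment discipline, the Python sketch below refuses to enable a change unless every server reports the expected build. The function, server names, and version strings are hypothetical and are not taken from the SEC order. Real release tooling covers far more than this single check.

```python
def safe_to_enable(expected_version: str, deployed: dict[str, str]) -> tuple[bool, list[str]]:
    """Return (ok, stale_servers) for a fleet.

    `deployed` maps a server name to the build version it is running.
    An empty fleet is treated as not safe, since nothing was verified.
    """
    stale = sorted(name for name, version in deployed.items()
                   if version != expected_version)
    ok = bool(deployed) and not stale
    return ok, stale


# Hypothetical fleet where one server missed the rollout.
fleet = {"srv-1": "2.4.0", "srv-2": "2.4.0", "srv-3": "2.3.9"}
ok, stale = safe_to_enable("2.4.0", fleet)
if not ok:
    print("Do not enable; servers on the wrong build:", ", ".join(stale))
```

The check itself is trivial. The operating commitment is making sure something like it runs on every release, and that someone is accountable when it fails.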

AI does not remove those requirements.

If anything, AI can make them easier to ignore because the first version arrives faster.

A better make-vs-buy test

Most make-vs-buy discussions start with feature fit and license cost. Those matter, but they are not enough.

I would ask seven questions.

1. Is this workflow strategic or just specific?

Every company has weird workflows. Specific does not mean strategic.

A procurement approval matrix with unusual thresholds may feel unique. It probably is not a source of competitive advantage. A simulation environment for autonomous driving behavior might be.

The more strategic the workflow, the stronger the build case.

2. Will the requirements change because the company is learning?

If the process is still being discovered, building may be better. Early NPI work often falls into this bucket. The team is learning what matters, what data exists, and how decisions should be made.

If the process is mature and the company mainly needs reliability, auditability, and adoption, buying usually looks better.

3. Do we have the team to maintain it after the exciting part?

Do not ask whether the team can build it. Ask whether the team can maintain it when nobody is excited anymore.

That includes engineering, product management, security, support, documentation, and user training.

4. Does the vendor see more edge cases than we do?

This is one of the best reasons to buy.

A procurement software vendor working with hundreds of manufacturers will see more supplier quote formats, ERP integration patterns, commodity structures, approval workflows, and sourcing events than one manufacturer will see alone.

That does not make the vendor right about every workflow. It means the product should contain lessons the customer does not have to learn the hard way.

5. What happens to the data?

Owning the software and owning the data are different things.

A company can buy software and still negotiate data rights, export access, retention rules, and restrictions on model training. A company can also build internal software and end up with worse data because every field was created in a hurry and nobody maintained the schema.

The right question is not “where does the code live?” It is “can we trust, access, govern, and reuse the data?”

6. What is the cost of being wrong?

If an internal lunch-ordering app breaks, people get annoyed. If a supplier quote comparison tool mishandles currency conversion or freight terms, the company can award business incorrectly. If a quality system loses traceability, the cost can be much higher.

Higher-consequence workflows favor professional software unless the company has a clear reason to build and the operating maturity to support it.

7. Can we leave?

This question applies to both paths.

If you buy, can you export your data and move to another vendor? If you build, can the company keep the system alive if two key engineers leave?

Lock-in is not only a vendor problem. Internal software can lock a company into tribal knowledge.
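One way to keep the seven answers side by side is a small record like the Python sketch below. The field names, the mapping of each question to a build-or-buy lean, and the example answers are illustrative assumptions, not a scoring rule. The questions are meant to prompt discussion, and a single answer, such as a high cost of being wrong, can outweigh the rest.

```python
from dataclasses import dataclass, fields


@dataclass
class MakeVsBuyAnswers:
    # True leans toward building; False leans toward buying (assumed mapping).
    workflow_is_strategic: bool               # question 1
    process_still_being_discovered: bool      # question 2
    team_can_maintain_long_term: bool         # question 3
    we_see_more_edge_cases_than_vendor: bool  # question 4
    we_can_govern_the_data_if_built: bool     # question 5
    cost_of_being_wrong_is_low: bool          # question 6
    survives_key_engineers_leaving: bool      # question 7


def summarize(answers: MakeVsBuyAnswers) -> dict[str, list[str]]:
    """Group the answers by which way they lean, without weighting them."""
    leans_build = [f.name for f in fields(answers) if getattr(answers, f.name)]
    leans_buy = [f.name for f in fields(answers) if not getattr(answers, f.name)]
    return {"leans_build": leans_build, "leans_buy": leans_buy}


# Hypothetical answers for an internal supplier quote comparison tool.
quote_tool = MakeVsBuyAnswers(
    workflow_is_strategic=False,
    process_still_being_discovered=False,
    team_can_maintain_long_term=True,
    we_see_more_edge_cases_than_vendor=False,
    we_can_govern_the_data_if_built=True,
    cost_of_being_wrong_is_low=False,
    survives_key_engineers_leaving=False,
)
summary = summarize(quote_tool)
print(len(summary["leans_build"]), "lean build;", len(summary["leans_buy"]), "lean buy")
```

The output is deliberately a list of reasons rather than a single score, so the conversation stays on the specific answers instead of a number.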

Procurement is a good example because the edge cases are the product

Direct materials procurement looks simple from far away.

Run an RFQ. Compare bids. Pick a supplier. Track savings.

The real work is messier. Buyers need to compare quotes across currencies, units of measure, incoterms, tooling assumptions, freight terms, capacity constraints, lead times, payment terms, and engineering changes. They need to coordinate with engineering, quality, finance, and suppliers. They need to preserve enough history to explain why a decision was made months later.

This is the type of workflow where AI-assisted internal tools can create a convincing demo quickly. Upload three supplier quotes and summarize the differences. Great.

But the value is in the next hundred details: quote normalization, version control, supplier communication, approval routing, should-cost context, ERP handoff, audit history, and the ability to handle the strange cases that show up during a real sourcing event.

LightSource works on this problem for manufacturers buying direct materials. The product helps teams manage sourcing events, compare supplier quotes, track cost drivers, and keep supplier communication tied to the parts and programs it belongs to. For challenger manufacturers trying to move quickly through NPI, the goal is not to add another system for its own sake. It is to reduce the amount of learning that happens through email threads, spreadsheets, and late surprises.

That is where buying can make sense. Not because procurement teams cannot build tools. Many can. The question is whether they want their scarce engineering and IT capacity spent rebuilding the same production details that a focused vendor has already had to solve.

The better answer is not “make” or “buy”

The best companies will do both.

They will buy professional software for common but consequential workflows where reliability, support, security, and accumulated edge cases matter. They will build where the workflow is genuinely strategic or too new for the market to serve well. They will use AI aggressively for prototypes, internal automation, data cleanup, testing, documentation, and glue code.

But they will not confuse a prototype with a product.

That distinction matters more in the age of AI, not less. When building software becomes easier, the number of internal tools will grow. Some will be useful. Some will become quiet liabilities. The companies that do well will have a sharper governance muscle around which tools deserve production ownership.

A good make-vs-buy decision should feel less like a technology preference and more like an operating decision.

Who owns the roadmap?

Who fixes the bug?

Who answers the audit question?

Who gets paged?

If those answers are not clear, the software is not cheap. It is just early.

Sources

Vertice SaaS Inflation Index -- SaaS pricing research and benchmarks on rising software spend per employee.

SaaStr: SaaS growth and price increases -- SaaStr analysis and commentary on SaaS revenue growth, pricing, and renewal pressure.

Veracode State of Software Security -- Research on software security, including findings related to AI-generated code and application risk.

Trend Micro research on vibe coding risks -- Security research on vulnerabilities found in AI-assisted and vibe-coded applications.

Stack Overflow Developer Survey 2025 -- Developer survey data on AI tool usage, trust, and sentiment.

Gartner IT budget and maintenance research -- Gartner research and advisory work on IT spending, technical debt, and maintenance burden.

SEC administrative proceeding on Knight Capital Americas LLC -- SEC order describing Knight Capital’s 2012 trading incident, controls failures, and financial loss.

UK Post Office Horizon IT Inquiry -- Public inquiry materials on the Horizon system, Fujitsu, the Post Office, and the impact on subpostmasters.

Frequently Asked Questions

What does make vs. buy mean in software?

Make vs. buy is the decision to build software internally or purchase it from an external vendor. In the age of AI, the build option looks easier because teams can create prototypes quickly, but the full decision still has to include maintenance, security, support, data governance, and long-term ownership.

How has AI changed the make-vs-buy decision?

AI has reduced the cost and time required to create a working software prototype. It has not removed the need for production engineering, testing, monitoring, security reviews, documentation, user support, and roadmap ownership. That makes the distinction between prototype and production more important.

When should a company build software instead of buying it?

A company should build when the software directly supports a strategic advantage, when no vendor can meet the requirements, or when the workflow is still being discovered. Examples include proprietary simulation systems, product-specific engineering tools, or operational systems tied closely to how the company competes.

When should a company buy professional software?

A company should buy professional software when the workflow is common enough that a vendor has deeper experience, broader edge-case coverage, and a better support model than the company can justify internally. This is especially true for consequential workflows involving suppliers, financial decisions, audit trails, quality records, or regulated data.

What is the biggest hidden cost of building internal software?

The biggest hidden cost is long-term ownership. Internal software needs bug fixes, security patches, documentation, access control, testing, integrations, user support, and a product roadmap. The initial build may be cheap, but the maintenance burden often lasts for years.

How do you measure the total cost of ownership for internally built software?

Total cost of ownership includes the initial build, ongoing engineering for bug fixes and feature additions, security patching, infrastructure, documentation, user support, and the opportunity cost of engineers who could be working on the product. A useful rule of thumb is that the initial build is roughly 20-30% of the lifetime cost over five years; the remainder is maintenance, support, and modernization as adjacent systems change. Most teams underestimate the maintenance share.
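The rule of thumb above can be turned into quick arithmetic. The Python sketch below takes the 20-30% build share as given and uses a hypothetical build cost. It is a back-of-envelope estimate, not a cost model.

```python
def lifetime_cost_range(build_cost: float,
                        low_share: float = 0.20,
                        high_share: float = 0.30) -> tuple[float, float]:
    """Return (min, max) lifetime cost implied by the build-share rule of thumb.

    If the build is `share` of lifetime cost, lifetime cost is build_cost / share.
    A larger share implies a smaller lifetime total, hence the ordering below.
    """
    if not 0 < low_share <= high_share <= 1:
        raise ValueError("shares must satisfy 0 < low_share <= high_share <= 1")
    return build_cost / high_share, build_cost / low_share


build_cost = 300_000  # hypothetical: the initial build estimate, in dollars
lifetime_min, lifetime_max = lifetime_cost_range(build_cost)
after_launch_min = lifetime_min - build_cost
after_launch_max = lifetime_max - build_cost
print(f"Lifetime: {lifetime_min:,.0f} to {lifetime_max:,.0f}")
print(f"After launch: {after_launch_min:,.0f} to {after_launch_max:,.0f}")
```

Under these assumptions, a $300,000 build implies roughly $1.0 million to $1.5 million over five years, with $0.7 million to $1.2 million of that arriving after launch.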

Do AI coding assistants change the make-vs-buy calculation by lowering the build cost?

They lower the prototype cost, not the production cost. AI assistants accelerate the writing of the first working version, but the production-readiness work -- testing, security, monitoring, edge-case handling, and long-term ownership -- is largely unchanged. If anything, they make it easier to confuse a working prototype with a production system, which is the more common mistake.

AI has made the first week of building software look much cheaper than it really is.

That is the trap.

A buyer, engineer, or IT team can now sit down with Cursor, Replit, Lovable, Claude, or GitHub Copilot and get a working prototype in a few days. The app has login. It has a dashboard. It connects to a spreadsheet. It summarizes supplier quotes. It can probably generate a chart that looks good in the Monday staff meeting.

Then someone asks the natural question: why are we paying a vendor six figures for this?

It is a fair question. SaaS costs have gone up. Vertice estimated that SaaS spend reached $9,100 per employee in 2025, up from $7,900 in 2023. SaaStr has argued that a large share of recent SaaS revenue growth is coming from price increases rather than new customers. Every CFO has seen the same pattern: a tool starts cheap, becomes embedded in the workflow, then the renewal comes back 18% higher.

So yes, the instinct to build is rational.

But the make-vs-buy question has changed in a way that is easy to miss. AI has lowered the cost of making a prototype. It has not lowered the cost of owning a production system. In some cases, it has raised it, because more code is being created by people who do not have a clear plan for maintaining it.

The question is not “can we build this?”

The question is “do we want to be responsible for this software three years from now?”

The prototype is not the product

Most internal software projects look good at the beginning because the first demo captures only the happy path.

Let’s say a procurement team wants a tool to compare supplier quotes. The team has an Excel template. They ask IT for help. Someone builds a small app that lets suppliers upload quotes, normalizes line items, and flags the lowest cost by part number.

Pretty simple, right?

Now the real work starts.

One supplier quotes in kilograms and another in pounds. One includes freight and another excludes it. One supplier uses a parent-child part structure. One quote comes in with a minimum order quantity that breaks the unit economics. One supplier adds a tooling amortization charge over 18 months. One file is in German. One supplier submits a revised quote after the deadline, but the buyer wants to keep both versions because the audit trail matters.

The first version of the app handled the demo. The production version has to handle reality.

This is where professional software earns its keep. Not because vendors are magically smarter than internal teams. They are not. Professional software is valuable because the vendor has already lived through more edge cases than one company will see on its own.

A bug found by one customer becomes a fix for many customers. A security review done for one large customer improves the product for the next one. A workflow that breaks during one implementation gets hardened before the next deployment.

That shared learning curve is easy to undervalue when you are looking at a prototype. It is obvious when you are on call for the system.

AI made code cheaper. It did not make maintenance cheaper.

The strongest argument for building is that AI changes the labor curve.

A small internal team can now write more code than it could two years ago. A business analyst can sketch a workflow. A software engineer can scaffold an application quickly. A procurement ops person can generate scripts to clean messy supplier data. This is real.

But more code is not the same as more software.

The evidence so far is not comforting. Veracode reported in its 2025 State of Software Security work that 45% of AI-generated code samples failed security tests. Trend Micro researchers found 69 vulnerabilities across 15 vibe-coded test applications. Other studies have found AI-generated code with materially higher vulnerability rates than human-written code, more duplication, and more debugging work than expected.

That last point matters. The expensive part of internal software is usually not writing version one. It is debugging version nine, when the original author has moved teams, the business process has changed, and nobody remembers why the database has three different supplier ID fields.

Gartner estimates that maintenance consumes 55-80% of IT budgets. Software maintenance accounts for 50-80% of total cost of ownership.

Gartner has long estimated that maintenance consumes the majority of IT budgets, often cited in the 55% to 80% range. Software maintenance is commonly estimated at 50% to 80% of total ownership cost. Those numbers sound high until you look at the backlog of any large IT organization: migrations, security patches, permission issues, broken integrations, performance problems, audit requests, and “small” workflow changes that require a schema change.

AI helps with some of that. It can explain code. It can write tests. It can suggest fixes.

It also creates a new failure mode: code that looks plausible enough to ship before anyone understands it.

That is why developer trust in AI coding tools has become more complicated. Stack Overflow’s 2025 Developer Survey found that trust in AI-generated output remains low, with only 29% of developers reporting that they trust AI tools. Developers are not rejecting AI. They are learning where the boundary is.

The boundary is production ownership.

When building is the right answer

The case for buying professional software is not the same as saying every company should outsource every tool.

Some software should be built.

If the software is the product, build it. If the workflow is a core operating advantage, build it. If no vendor can understand the physics, constraints, or timing of the problem well enough, build it.

Back at Waymo, simulation was not a generic analytics tool. It was central to how the company evaluated autonomous driving behavior. You could not buy an off-the-shelf simulator that captured the specific agent behavior, sensor stack, scenario generation, and evaluation loops the team needed. The software was part of the company’s technical advantage.

At Tesla during Model 3 NPI, there were many cases where internal tooling made sense because the product, factory, and supply base were changing at the same time. A general-purpose tool often could not keep up with the rate of iteration. When the process itself is being invented, buying can force you into the wrong operating model.

That is the strongest counterargument to the buy case: vendors tend to encode yesterday’s common process. If you are doing something genuinely different, professional software can slow you down.

But that argument is narrower than people think.

Most companies are not building software because the workflow is uniquely strategic. They are building because the vendor quote felt high, the implementation looked annoying, or someone on the team got excited after a good AI-assisted demo.

Those are not bad reasons to investigate building. They are bad reasons to commit to owning a system indefinitely.

A useful test is this: if the software works perfectly, does it become part of how you beat competitors?

If yes, consider building.

If no, be careful. You may be signing up to maintain plumbing.

Buying has real downsides

The lazy version of the buy argument ignores the pain of buying software.

SaaS inflation is real. Vendor lock-in is real. Bad implementations are real. Some software gets sold by people who have never done the job the product claims to support. Some tools require so much configuration that the customer effectively becomes the systems integrator. Some vendors make data export painful because they know customers will leave if switching is easy.

There is also a more serious risk: buying software can create false distance from accountability.

The UK Post Office Horizon scandal is the extreme case. Horizon was an accounting and point-of-sale system built by Fujitsu and used by the Post Office. Software errors contributed to shortfalls that were blamed on subpostmasters. Hundreds of people were prosecuted, many lives were damaged, and the public inquiry has spent years unpacking what went wrong.

That case is not a normal SaaS implementation. It is much more severe. But it makes one point clearly: buying software does not remove responsibility for how the system behaves. If a company uses software to make decisions about money, people, quality, or compliance, the company still owns the consequences.

So the right make-vs-buy frame is not “vendors good, internal teams bad.”

It is more specific.

Buy when the vendor’s learning curve, security posture, support model, and product depth are better than what you can reasonably build and maintain. Build when the system encodes a capability that is central to your company’s advantage and cannot be purchased without giving up that advantage.

Everything else requires discipline.

The hidden question is who gets paged

Internal tools often fail because nobody wants to be the product manager after launch.

During the build phase, the project has energy. There is a Slack channel. The VP cares. IT has assigned engineers. The business team is responsive because they want the tool.

Six months later, the original sponsor has moved on. The engineer who wrote most of the code is working on a higher-priority project. The business team wants four changes because the workflow evolved. A supplier cannot log in. The API token expired. The dashboard numbers do not match finance.

Who owns it?

This question sounds operational, but it is the make-vs-buy question in plain English.

With a vendor, you still need an internal owner. You need someone who understands the workflow, manages configuration, pushes for adoption, handles vendor governance, and checks whether the product is doing what was promised.

But you do not need to own every incident. You do not need to patch every dependency. You do not need to staff weekend coverage for a tool that is important but not central to your engineering roadmap.

With internal software, you do.

This is where the cost model often lies. The build estimate includes two engineers for twelve weeks. It rarely includes support rotations, documentation, access reviews, penetration testing, data retention policies, disaster recovery, roadmap planning, onboarding materials, and the cost of business users losing trust when the system breaks twice in one month.

If the system touches suppliers, customers, payments, quality, or regulated records, that missing work is not optional.

A real failure mode: Knight Capital

Knight Capital is one of the clearest examples of software ownership going wrong.

On August 1, 2012, Knight deployed code related to its trading systems. A dormant function was accidentally activated on some servers. In roughly 45 minutes, the firm sent millions of erroneous orders into the market and lost about $440 million. The SEC later described failures in deployment controls, testing, and supervisory procedures.

This was not a vibe-coded procurement app. Knight was a sophisticated financial firm. That is exactly why the example matters.

The lesson is not that internal software is bad. Trading firms often should build trading systems. The lesson is that production software is an operating commitment. Deployment discipline, monitoring, rollback plans, change management, and ownership structures matter as much as the code.
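One small piece of deployment discipline can be sketched in code: refuse to switch on a release until every server reports the build you expect. This is a minimal illustration, not a reconstruction of Knight's systems. The server names, version strings, and function names are all hypothetical.

```python
def find_version_drift(reported_versions: dict[str, str], expected: str) -> list[str]:
    """Return the servers whose reported build differs from the expected one."""
    return sorted(
        host for host, version in reported_versions.items() if version != expected
    )


def safe_to_enable(reported_versions: dict[str, str], expected: str) -> bool:
    """A release is only safe to enable when no server has drifted."""
    return not find_version_drift(reported_versions, expected)


# Hypothetical fleet: one server was missed during the rollout.
fleet = {"srv-1": "2.4.0", "srv-2": "2.4.0", "srv-3": "2.3.9"}

print(find_version_drift(fleet, expected="2.4.0"))  # ['srv-3']
print(safe_to_enable(fleet, expected="2.4.0"))      # False
```

The check itself is trivial. The hard part is organizational: someone has to own it, run it on every deployment, and have the authority to stop the release when it fails.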

AI does not remove those requirements.

If anything, AI can make them easier to ignore because the first version arrives faster.

A better make-vs-buy test

Most make-vs-buy discussions start with feature fit and license cost. Those matter, but they are not enough.

I would ask seven questions.

1. Is this workflow strategic or just specific?

Every company has weird workflows. Specific does not mean strategic.

A procurement approval matrix with unusual thresholds may feel unique. It probably is not a source of competitive advantage. A simulation environment for autonomous driving behavior might be.

The more strategic the workflow, the stronger the build case.

2. Will the requirements change because the company is learning?

If the process is still being discovered, building may be better. Early NPI work often falls into this bucket. The team is learning what matters, what data exists, and how decisions should be made.

If the process is mature and the company mainly needs reliability, auditability, and adoption, buying usually looks better.

3. Do we have the team to maintain it after the exciting part?

Do not ask whether the team can build it. Ask whether the team can maintain it when nobody is excited anymore.

That includes engineering, product management, security, support, documentation, and user training.

4. Does the vendor see more edge cases than we do?

This is one of the best reasons to buy.

A procurement software vendor working with hundreds of manufacturers will see more supplier quote formats, ERP integration patterns, commodity structures, approval workflows, and sourcing events than one manufacturer will see alone.

That does not make the vendor right about every workflow. It means the product should contain lessons the customer does not have to learn the hard way.

5. What happens to the data?

Owning the software and owning the data are different things.

A company can buy software and still negotiate data rights, export access, retention rules, and restrictions on model training. A company can also build internal software and end up with worse data because every field was created in a hurry and nobody maintained the schema.

The right question is not “where does the code live?” It is “can we trust, access, govern, and reuse the data?”

6. What is the cost of being wrong?

If an internal lunch-ordering app breaks, people get annoyed. If a supplier quote comparison tool mishandles currency conversion or freight terms, the company can award business incorrectly. If a quality system loses traceability, the cost can be much higher.

Higher-consequence workflows favor professional software unless the company has a clear reason to build and the operating maturity to support it.

7. Can we leave?

This question applies to both paths.

If you buy, can you export your data and move to another vendor? If you build, can the company keep the system alive if two key engineers leave?

Lock-in is not only a vendor problem. Internal software can lock a company into tribal knowledge.
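The seven questions can be written down as a simple checklist so the answers are recorded rather than argued from memory. The sketch below is one hypothetical way to do that. The `Answer` type, the three labels, and the sample answers for a quote comparison tool are assumptions made for illustration, and the tally is deliberately unweighted.

```python
from dataclasses import dataclass

VALID_LEANS = ("build", "buy", "unclear")


@dataclass(frozen=True)
class Answer:
    question: str
    leans: str  # one of VALID_LEANS


def tally(answers: list[Answer]) -> dict[str, int]:
    """Count how many answers lean toward each option."""
    counts = {lean: 0 for lean in VALID_LEANS}
    for answer in answers:
        if answer.leans not in counts:
            raise ValueError(f"unknown lean: {answer.leans!r}")
        counts[answer.leans] += 1
    return counts


# Hypothetical answers for an internal supplier quote comparison tool.
answers = [
    Answer("Is this workflow strategic or just specific?", "buy"),
    Answer("Will the requirements change because the company is learning?", "buy"),
    Answer("Do we have the team to maintain it after the exciting part?", "unclear"),
    Answer("Does the vendor see more edge cases than we do?", "buy"),
    Answer("What happens to the data?", "unclear"),
    Answer("What is the cost of being wrong?", "buy"),
    Answer("Can we leave?", "unclear"),
]

print(tally(answers))  # {'build': 0, 'buy': 4, 'unclear': 3}
```

The tally is a conversation aid, not a decision rule. A single answer, such as a very high cost of being wrong, can outweigh the rest, and every "unclear" is a question someone still has to go answer.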

Procurement is a good example because the edge cases are the product

Direct materials procurement looks simple from far away.

Run an RFQ. Compare bids. Pick a supplier. Track savings.

The real work is messier. Buyers need to compare quotes across currencies, units of measure, incoterms, tooling assumptions, freight terms, capacity constraints, lead times, payment terms, and engineering changes. They need to coordinate with engineering, quality, finance, and suppliers. They need to preserve enough history to explain why a decision was made months later.

This is the type of workflow where AI-assisted internal tools can create a convincing demo quickly. Upload three supplier quotes and summarize the differences. Great.

But the value is in the next hundred details: quote normalization, version control, supplier communication, approval routing, should-cost context, ERP handoff, audit history, and the ability to handle the strange cases that show up during a real sourcing event.
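To make just the first of those details concrete, here is a minimal sketch of quote normalization across currency and unit of measure. The exchange rate, the `Quote` fields, and the supplier data are hypothetical, and a real tool would also need rate dates, rounding policy, incoterms, and much more.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical conversion tables for illustration only.
USD_PER = {"USD": Decimal("1"), "EUR": Decimal("1.10")}      # assumed FX rate
KG_PER = {"kg": Decimal("1"), "lb": Decimal("0.45359237")}  # exact lb-to-kg factor


@dataclass(frozen=True)
class Quote:
    supplier: str
    unit_price: Decimal
    currency: str
    unit: str
    freight_included: bool


def usd_per_kg(quote: Quote) -> Decimal:
    """Normalize a quote to USD per kilogram, rounded to cents."""
    if quote.currency not in USD_PER or quote.unit not in KG_PER:
        raise ValueError(f"cannot normalize quote from {quote.supplier}")
    price_usd = quote.unit_price * USD_PER[quote.currency]
    return (price_usd / KG_PER[quote.unit]).quantize(Decimal("0.01"))


def mixed_freight_terms(quotes: list[Quote]) -> bool:
    """True when some quotes include freight and others do not."""
    return len({q.freight_included for q in quotes}) > 1


quotes = [
    Quote("Supplier A", Decimal("10.00"), "EUR", "kg", freight_included=True),
    Quote("Supplier B", Decimal("5.20"), "USD", "lb", freight_included=False),
]

naive = min(quotes, key=lambda q: q.unit_price).supplier
normalized = min(quotes, key=usd_per_kg).supplier
print(naive, normalized)            # Supplier B Supplier A
print(mixed_freight_terms(quotes))  # True
```

The raw numbers point to Supplier B. After normalization, Supplier A is cheaper per kilogram, and the two quotes still are not comparable because their freight terms differ. That is one edge case out of the hundred.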

LightSource works on this problem for manufacturers buying direct materials. The product helps teams manage sourcing events, compare supplier quotes, track cost drivers, and keep supplier communication tied to the parts and programs it belongs to. For challenger manufacturers trying to move quickly through NPI, the goal is not to add another system for its own sake. It is to reduce the amount of learning that happens through email threads, spreadsheets, and late surprises.

That is where buying can make sense. Not because procurement teams cannot build tools. Many can. The question is whether they want their scarce engineering and IT capacity spent rebuilding the same production details that a focused vendor has already had to solve.

The better answer is not “make” or “buy”

The best companies will do both.

They will buy professional software for common but consequential workflows where reliability, support, security, and accumulated edge cases matter. They will build where the workflow is genuinely strategic or too new for the market to serve well. They will use AI aggressively for prototypes, internal automation, data cleanup, testing, documentation, and glue code.

But they will not confuse a prototype with a product.

That distinction matters more in the age of AI, not less. When building software becomes easier, the number of internal tools will grow. Some will be useful. Some will become quiet liabilities. The companies that do well will have a sharper governance muscle for deciding which tools deserve production ownership.

A good make-vs-buy decision should feel less like a technology preference and more like an operating decision.

Who owns the roadmap?

Who fixes the bug?

Who answers the audit question?

Who gets paged?

If those answers are not clear, the software is not cheap. It is just early.

Sources

Vertice SaaS Inflation Index -- SaaS pricing research and benchmarks on rising software spend per employee.

SaaStr: SaaS growth and price increases -- SaaStr analysis and commentary on SaaS revenue growth, pricing, and renewal pressure.

Veracode State of Software Security -- Research on software security, including findings related to AI-generated code and application risk.

Trend Micro research on vibe coding risks -- Security research on vulnerabilities found in AI-assisted and vibe-coded applications.

Stack Overflow Developer Survey 2025 -- Developer survey data on AI tool usage, trust, and sentiment.

Gartner IT budget and maintenance research -- Gartner research and advisory work on IT spending, technical debt, and maintenance burden.

SEC administrative proceeding on Knight Capital Americas LLC -- SEC order describing Knight Capital’s 2012 trading incident, controls failures, and financial loss.

UK Post Office Horizon IT Inquiry -- Public inquiry materials on the Horizon system, Fujitsu, the Post Office, and the impact on subpostmasters.

Frequently Asked Questions

What does make vs. buy mean in software?

Make vs. buy is the decision to build software internally or purchase it from an external vendor. In the age of AI, the build option looks easier because teams can create prototypes quickly, but the full decision still has to include maintenance, security, support, data governance, and long-term ownership.

How has AI changed the make-vs-buy decision?

AI has reduced the cost and time required to create a working software prototype. It has not removed the need for production engineering, testing, monitoring, security reviews, documentation, user support, and roadmap ownership. That makes the distinction between prototype and production more important.

When should a company build software instead of buying it?

A company should build when the software directly supports a strategic advantage, when no vendor can meet the requirements, or when the workflow is still being discovered. Examples include proprietary simulation systems, product-specific engineering tools, or operational systems tied closely to how the company competes.

When should a company buy professional software?

A company should buy professional software when the workflow is common enough that a vendor has deeper experience, broader edge-case coverage, and a better support model than the company can justify internally. This is especially true for consequential workflows involving suppliers, financial decisions, audit trails, quality records, or regulated data.

What is the biggest hidden cost of building internal software?

The biggest hidden cost is long-term ownership. Internal software needs bug fixes, security patches, documentation, access control, testing, integrations, user support, and a product roadmap. The initial build may be cheap, but the maintenance burden often lasts for years.

How do you measure the total cost of ownership for internally built software?

Total cost of ownership includes the initial build, ongoing engineering for bug fixes and feature additions, security patching, infrastructure, documentation, user support, and the opportunity cost of engineers who could be working on the product. A useful rule of thumb is that the initial build is roughly 20-30% of the lifetime cost over five years; the remainder is maintenance, support, and modernization as adjacent systems change. Most teams underestimate the maintenance share.
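The rule of thumb turns into simple arithmetic: if the build is 20-30% of the five-year cost, the lifetime cost is the build cost divided by that share. The sketch below assumes a hypothetical $150,000 build estimate. The figure and the function name are illustrative, not benchmarks.

```python
def lifetime_cost_range(
    build_cost: int, low_share_pct: int = 20, high_share_pct: int = 30
) -> tuple[float, float]:
    """Return (low, high) lifetime cost implied by the build's share of the total.

    A larger build share implies a smaller lifetime cost, so the high share
    gives the low end of the range and the low share gives the high end.
    """
    if build_cost <= 0 or not 0 < low_share_pct <= high_share_pct <= 100:
        raise ValueError("build cost and shares must be positive and ordered")
    low = build_cost * 100 / high_share_pct
    high = build_cost * 100 / low_share_pct
    return low, high


build_estimate = 150_000  # hypothetical build estimate in dollars
low, high = lifetime_cost_range(build_estimate)

print(f"Lifetime cost: ${low:,.0f} to ${high:,.0f}")
print(f"Left out of the build estimate: ${low - build_estimate:,.0f} to ${high - build_estimate:,.0f}")
```

Under these assumptions, a $150,000 build implies $500,000 to $750,000 over five years, which means $350,000 to $600,000 of maintenance, support, and modernization that never appeared in the original estimate.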

Do AI coding assistants change the make-vs-buy calculation by lowering the build cost?

They lower the prototype cost, not the production cost. AI assistants accelerate the writing of the first working version, but the production-readiness work -- testing, security, monitoring, edge-case handling, and long-term ownership -- is largely unchanged. If anything, they make it easier to confuse a working prototype with a production system, which is the more common mistake.


Faster sourcing. Lower cost. Less chaos.

Try out LightSource and you’ll never go back to Excel and email.


*GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally, and COOL VENDORS is a registered trademark of Gartner, Inc. and/or its affiliates and are used herein with permission. All rights reserved. Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.