Digital Twins: From Tesla to Waymo to Your Supply Chain

Spencer Penn

The term "digital twin" has been in circulation long enough that it's started to mean everything and nothing. Vendors use it to describe anything from a 3D model of a building to a real-time dashboard with a fancy UI. Gartner predicts the market will hit $183 billion by 2031. But the most honest thing you can say about digital twins in 2026 is that most implementations don't work very well, and the ones that do share a specific set of characteristics that the rest lack.

I've spent the last decade working with digital twins at three very different companies -- Tesla, Waymo, and now LightSource -- and the gap between the implementations that changed how we operated and the ones that gathered dust was always the same gap. It wasn't a technology problem. It was a fidelity problem.

What a Digital Twin Actually Is

A digital twin is a software model of a real-world system that's accurate enough to make decisions against. That's it. The system might be a vehicle, a factory line, a driving environment, a supply chain, or a single machined part. The model might run in real time or in batch. It might be built from physics simulations, sensor data, or both.

The key phrase in the definition is "accurate enough." A 3D visualization that looks like the factory floor but doesn't reflect current cycle times is not a digital twin. A spreadsheet model that reflects current costs but can't simulate a change is not a digital twin. The threshold is: can you make a decision based on this model that you'd otherwise need a physical test to validate? If yes, you have a digital twin. If no, you have a rendering.

What Makes a Digital Twin Work

The implementations I've seen succeed -- and the ones I've seen fail -- differ on three axes.

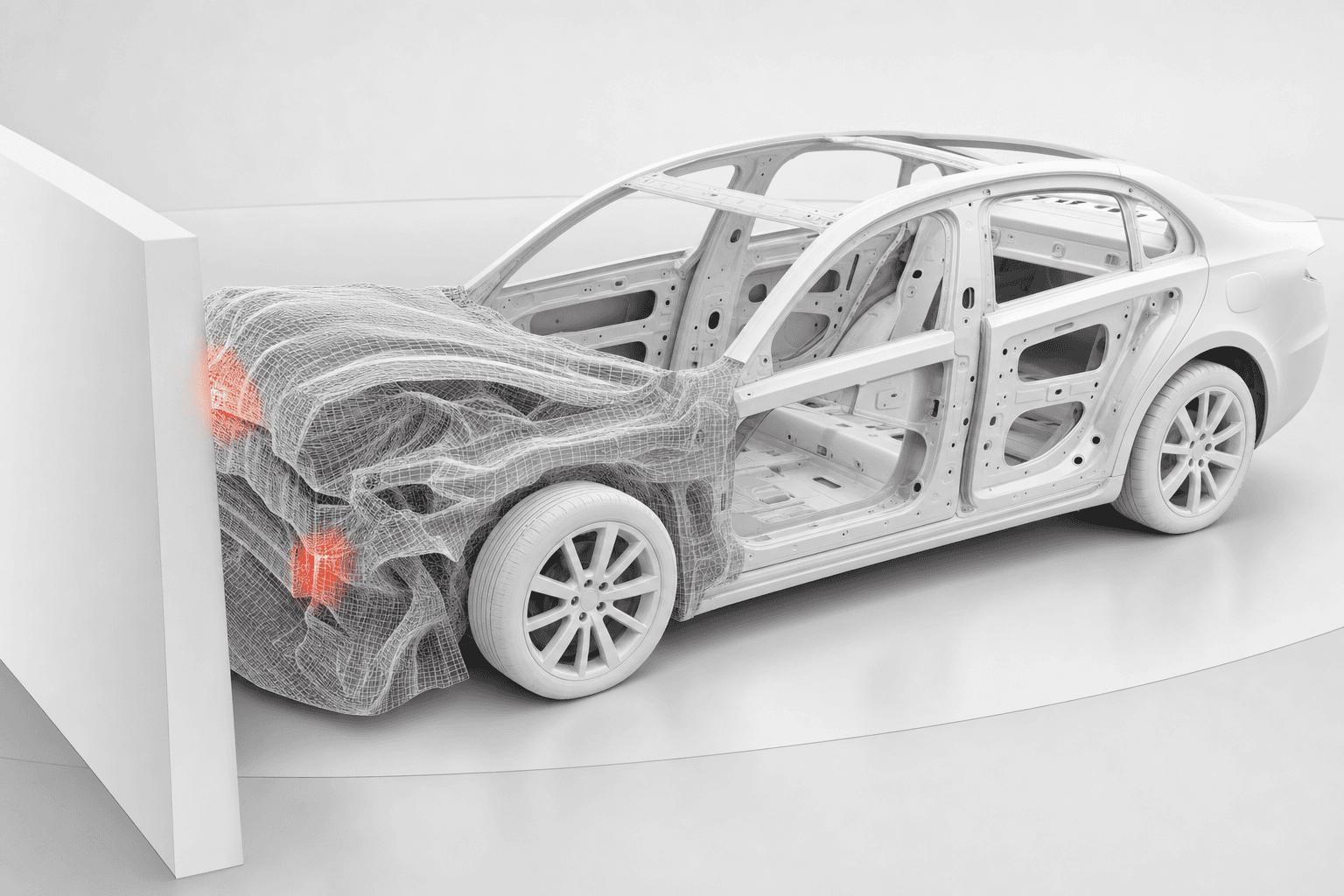

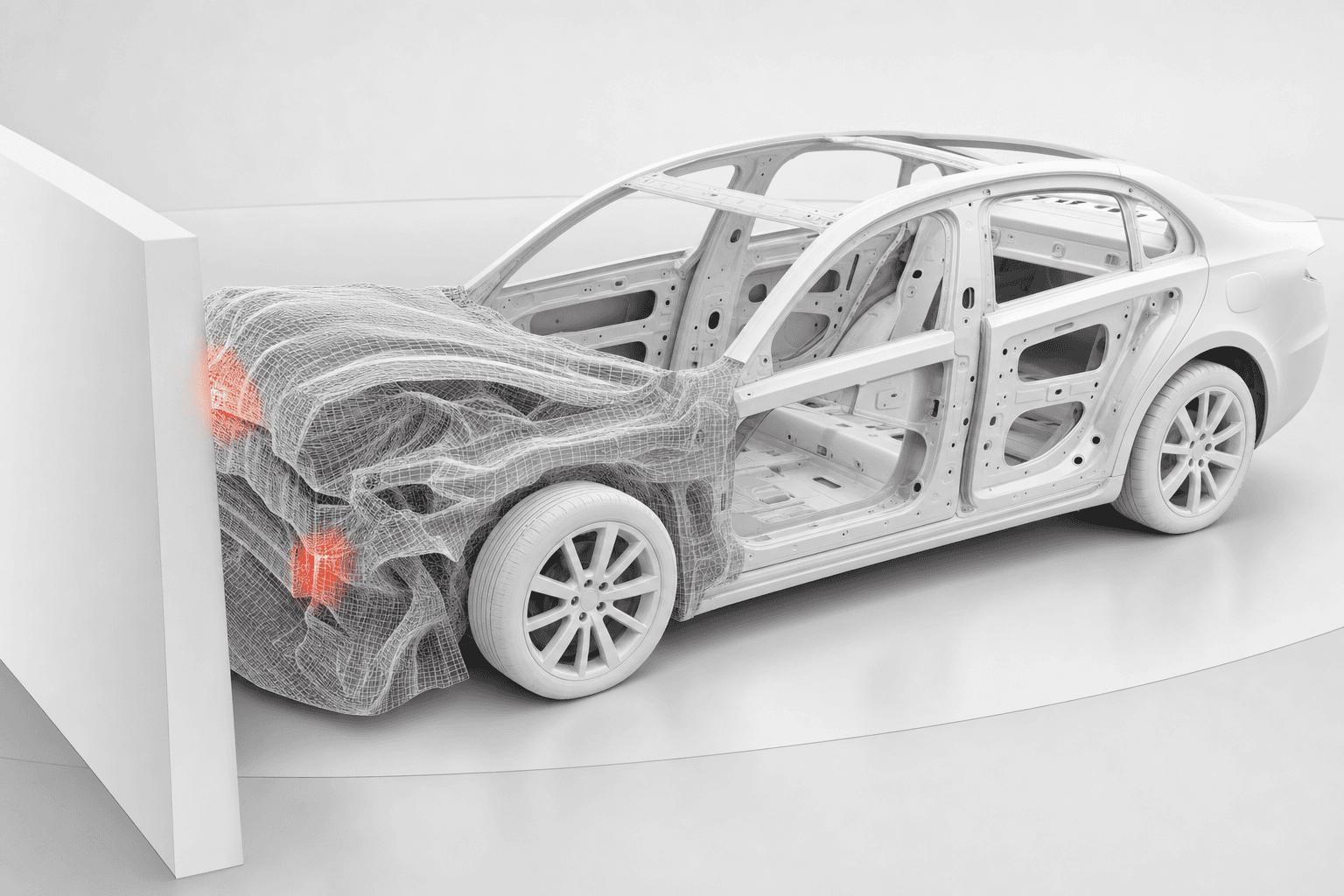

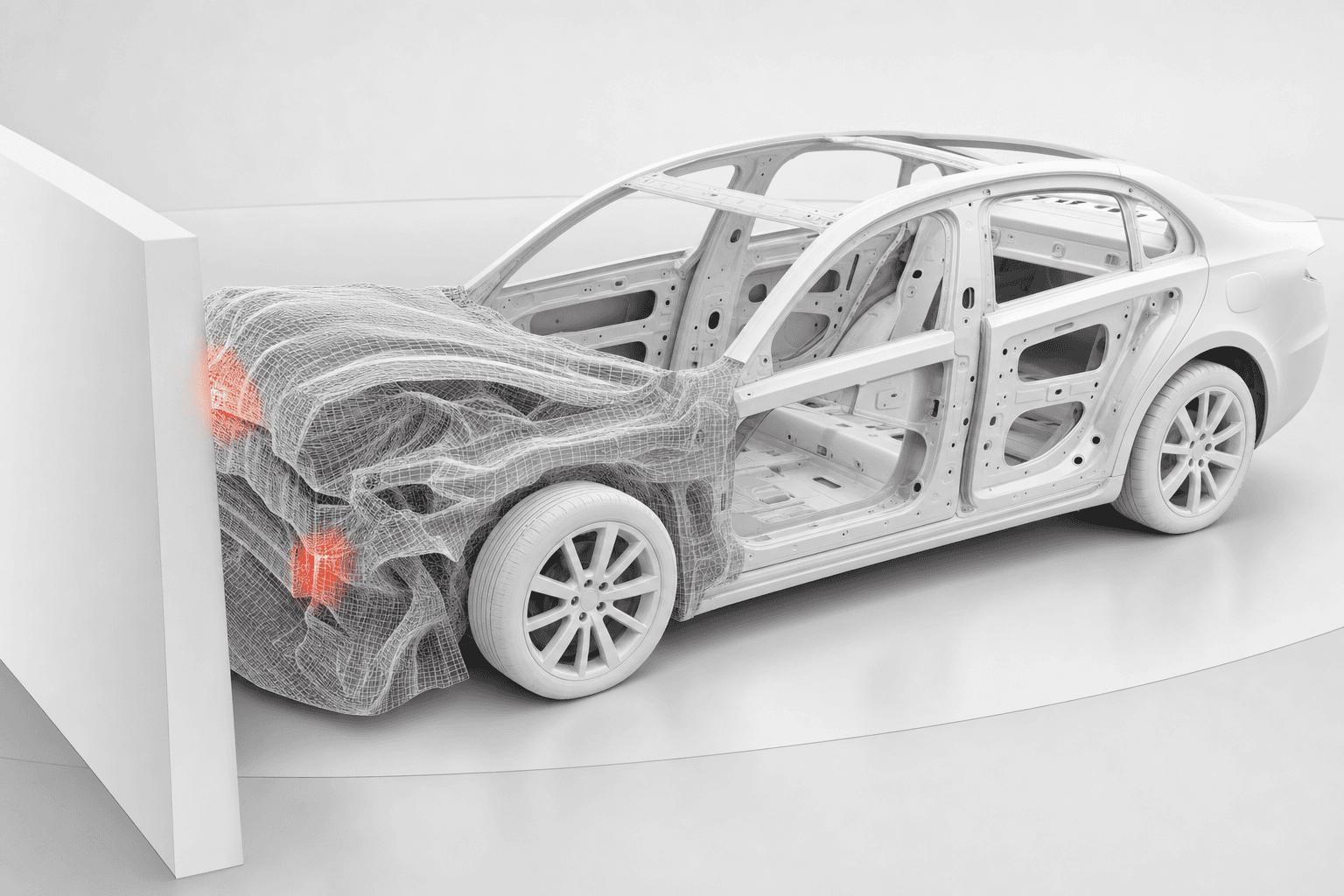

Fidelity to the current state of the physical system. A digital twin that was accurate six months ago and hasn't been updated since is the most common failure mode in manufacturing. The physical system drifts -- new tooling, new suppliers, different material lots, updated software -- and the model doesn't follow. At Waymo, we solved this by continuously ingesting real-world sensor data. At Tesla, the crash simulation models were updated with every design revision. The twin has to track the thing it's twinning, or the gap between model and reality grows until the model is useless.
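One way to make the fidelity requirement concrete is a recurring drift check that compares what the twin predicts against what the physical system most recently measured. The sketch below is illustrative only: the `drift_report` function, the station names, the cycle times, and the 5% tolerance are all assumptions made for the example, not details of any Tesla or Waymo system.

```python
from dataclasses import dataclass


@dataclass
class DriftResult:
    name: str
    predicted: float
    measured: float
    relative_error: float
    stale: bool


def drift_report(predicted, measured, tolerance=0.05):
    """Flag quantities where the twin's prediction has drifted from reality.

    predicted / measured: dicts mapping a quantity name to a value
    (for example, station cycle time in seconds). Measured values are
    assumed to be nonzero. tolerance is a hypothetical relative-error
    threshold, not an industry standard.
    """
    results = []
    for name, model_value in predicted.items():
        if name not in measured:
            continue  # no fresh measurement, so nothing to compare against
        actual = measured[name]
        rel_err = abs(model_value - actual) / abs(actual)
        results.append(
            DriftResult(name, model_value, actual, rel_err, rel_err > tolerance)
        )
    return results


# Hypothetical cycle times (seconds) for three stations on a line.
twin = {"weld": 42.0, "paint": 90.0, "final_assembly": 120.0}
floor = {"weld": 43.0, "paint": 104.0, "final_assembly": 121.0}

report = drift_report(twin, floor)
stale_names = [r.name for r in report if r.stale]
print("Stations where the twin needs updating:", stale_names)
```

In this made-up data only the paint station exceeds the tolerance. The point of the design is that staleness becomes something you measure on a schedule rather than something you discover after a bad decision.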

Iteration volume. The value of a digital twin is proportional to how many scenarios you can run through it. One simulation proves nothing. A thousand simulations start to reveal patterns. A million simulations, drawn from real-world distributions, give you statistical confidence. The organizations that get the most from digital twins are the ones that treat them as high-throughput testing environments, not as one-off visualization tools.
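The jump from one run to a thousand to a million can be illustrated with a small Monte Carlo sketch. It estimates a failure rate from simulated runs and reports a Wilson score interval, which narrows as the run count grows. Everything here is an assumption for illustration: the `simulate_failures` stand-in, the 2% true failure rate, and the simplification that runs are independent with a fixed failure probability, which real scenario sets generally are not.

```python
import math
import random


def wilson_interval(failures, runs, z=1.96):
    """Wilson score interval for a failure rate (roughly 95% for z=1.96)."""
    p_hat = failures / runs
    denom = 1 + z**2 / runs
    center = (p_hat + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def simulate_failures(runs, true_failure_rate, rng):
    """Stand-in for a real simulator: each run fails with a fixed probability."""
    return sum(rng.random() < true_failure_rate for _ in range(runs))


rng = random.Random(0)   # fixed seed so the sketch is repeatable
TRUE_RATE = 0.02         # hypothetical; unknown in any real system

widths = {}
for runs in (1, 1_000, 1_000_000):
    failures = simulate_failures(runs, TRUE_RATE, rng)
    low, high = wilson_interval(failures, runs)
    widths[runs] = high - low
    print(f"{runs:>9,} runs: failure rate in [{low:.4f}, {high:.4f}]")
```

With a single run the interval spans most of the range from 0 to 1, which is the numerical version of "one simulation proves nothing." The Wilson interval is used instead of the simpler normal approximation because the latter collapses to zero width when every run passes or every run fails.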

Closed-loop feedback. The best digital twins feed results back into the physical system. A crash simulation that changes the structural design. A driving simulation that updates the autonomy software. A cost model that changes the sourcing decision. If the twin doesn't close the loop -- if insights sit in a report that nobody acts on -- the investment doesn't compound.

Three Real-World Examples

Tesla: The Factory as a Digital Twin

At Tesla, the factory was the product as much as the vehicle was. The layout of the Gigafactories, the flow of materials, and the programming of the robots were all modeled extensively before the physical factory was built. Teams ran thousands of crash simulations and iterated on structural designs before building a single physical prototype -- each simulation cost minutes of compute time, while each physical crash test cost hundreds of thousands of dollars and weeks of lead time.

During the Model 3 program, the pace of iteration on the General Assembly line, on battery pack design, on motor geometry was accelerated by the ability to test virtually before committing to tooling. When you're trying to hit 5,000 units per week on an aggressive timeline, you don't learn by crashing physical prototypes. You learn by running the model until you're confident, then you cut metal. That's what let the team compress the NPI cycle from years to months.

I worked on parts of this program at Tesla before co-founding LightSource, where I'm now CEO.

Waymo: 20 Million Miles a Day

At Waymo, I led the simulation organization. The digital twin was a virtual replica of real-world driving environments, built from millions of miles of recorded sensor data. By 2019, Waymo had driven over 10 billion miles in simulation. The system ran roughly 20 million miles of simulated driving per day -- the equivalent of more than 100 years of real-world driving every 24 hours.

The use case was precise. If a vehicle failed to stop at a stop sign in a specific recorded scenario, we could replay that exact situation against updated software, then evaluate the fix across hundreds of thousands of similar situations to confirm that overall performance improved without introducing regressions. No fleet of physical vehicles could match that volume. No amount of on-road testing could reach the tail of edge cases that simulation covered routinely.
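The replay-then-evaluate workflow described above can be sketched as a comparison of two software versions across a shared scenario set. This is a toy, not Waymo's tooling: the `stops_in_time` physics check, the reaction delays, the deceleration value, and the three scenarios are all hypothetical, chosen only to show the shape of a fix-versus-regression gate.

```python
from functools import partial


def stops_in_time(scenario, reaction_delay_s, decel_mps2=4.0):
    """Toy pass/fail check: does the vehicle stop before the stop line?"""
    speed = scenario["speed_mps"]
    stopping_distance = speed * reaction_delay_s + speed**2 / (2 * decel_mps2)
    return stopping_distance <= scenario["distance_to_line_m"]


def compare_versions(scenarios, old_software, new_software):
    """Run every scenario against both versions and bucket the differences."""
    fixed, regressed = [], []
    for scenario in scenarios:
        old_ok, new_ok = old_software(scenario), new_software(scenario)
        if new_ok and not old_ok:
            fixed.append(scenario["id"])       # failure that the update resolves
        elif old_ok and not new_ok:
            regressed.append(scenario["id"])   # new failure the update introduces
    return fixed, regressed


# Hypothetical recorded scenarios approaching a stop sign.
scenarios = [
    {"id": "s1", "speed_mps": 10.0, "distance_to_line_m": 30.0},
    {"id": "s2", "speed_mps": 15.0, "distance_to_line_m": 40.0},
    {"id": "s3", "speed_mps": 20.0, "distance_to_line_m": 55.0},
]

# The two "software versions" differ only in an assumed reaction delay.
old_software = partial(stops_in_time, reaction_delay_s=1.0)
new_software = partial(stops_in_time, reaction_delay_s=0.5)

fixed, regressed = compare_versions(scenarios, old_software, new_software)
ship_it = len(regressed) == 0
print(f"fixed={fixed} regressed={regressed} ship={ship_it}")
```

Scenarios that fail under both versions, like `s3` here, land in neither bucket. A fuller harness would likely track those separately as known open failures.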

The lesson that applies beyond self-driving: the value of a digital twin scales with the diversity of scenarios you can run through it. Waymo didn't simulate 20 million miles a day because more miles sounded impressive. They did it because the long tail of rare driving scenarios is where the system fails, and you can only find those failures at volume.

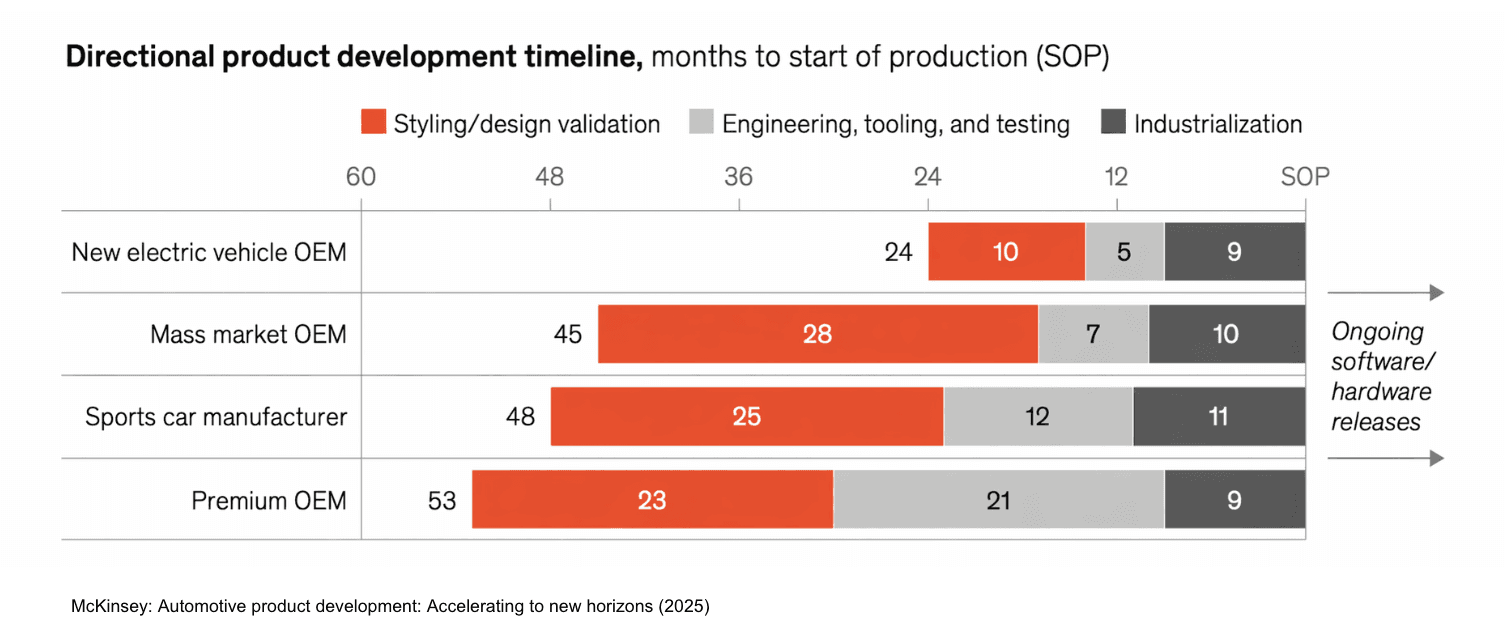

Chinese Automotive OEMs: 65% Virtual Testing

According to McKinsey, Chinese automotive OEMs now conduct roughly 65% of their testing through simulation, compared to 40-50% at OEMs in other regions. Three-quarters of those tests are highly automated. McKinsey estimates that maximizing this virtual testing approach can cut in half the number of physical prototypes required.

Chinese automotive OEMs conduct roughly 65% of their testing through simulation, compared to 40-50% elsewhere. Maximizing virtual testing can cut physical prototypes required in half. -- McKinsey, 2025

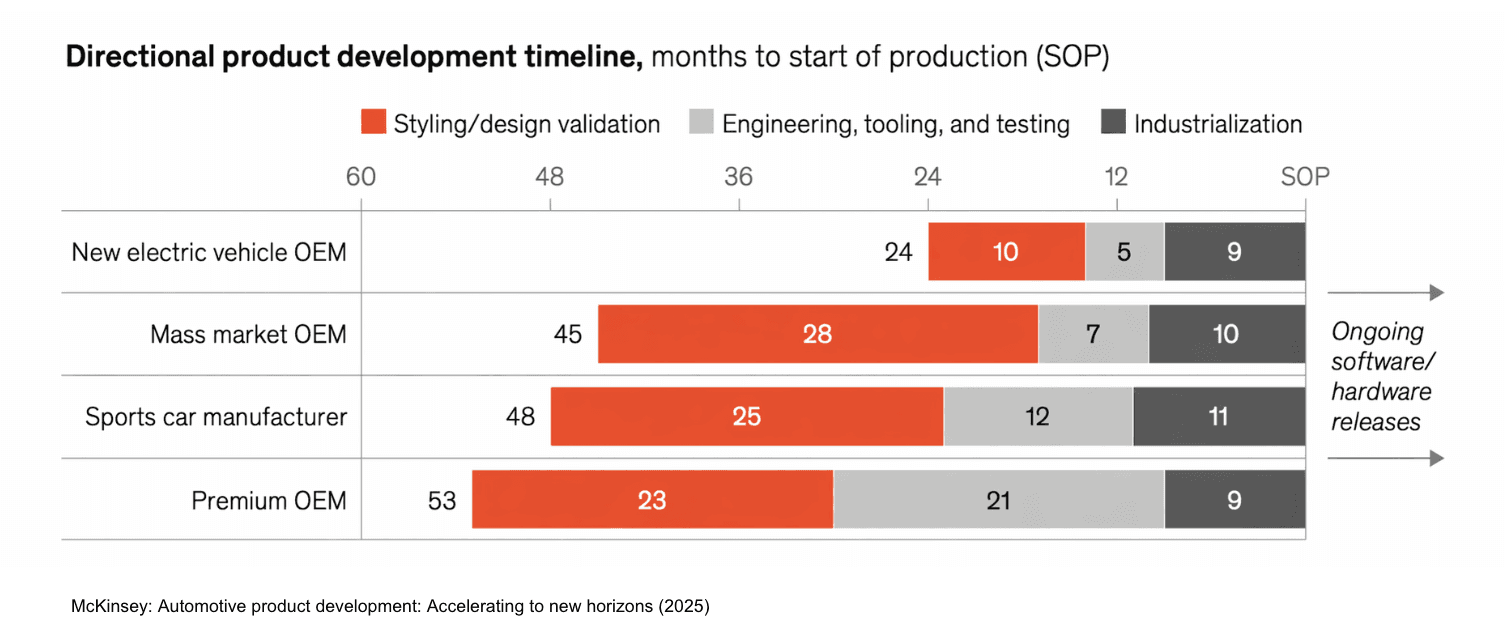

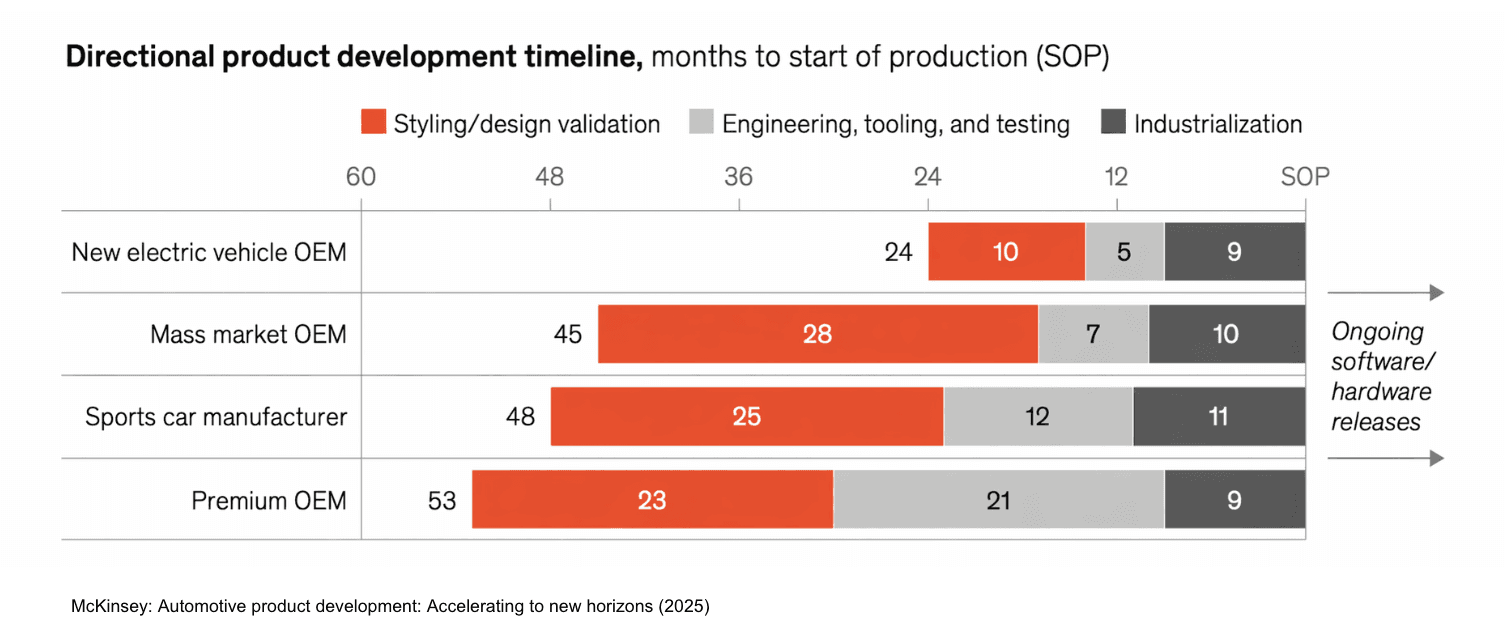

This isn't just a cost story. It's a speed story. The Chinese OEMs that are compressing vehicle development timelines from five years to two are doing it in large part by shifting testing upstream into virtual environments. Physical prototyping is the bottleneck. The companies that reduce their dependence on it move faster.

Where This Is Going -- and Where LightSource Fits

The pattern across these examples is consistent: the companies that build high-fidelity digital twins and run them at volume get to iterate faster, fail cheaper, and ship sooner. The ones that treat digital twins as visualization projects or one-off analyses don't.

The most interesting frontier is extending digital twins beyond the product itself and into the supply chain. Today, most companies model the physical product in simulation but treat procurement, cost modeling, and supplier networks as separate, largely manual processes. The opportunity is to connect the product twin to the supply chain twin -- so that when an engineer changes a material spec or a geometry, the cost implications, supplier availability, and lead time impacts are visible immediately, not six weeks later when the sourcing team gets around to re-quoting.

This is where the kind of hardware companies that use LightSource -- challenger manufacturers running aggressive NPI timelines, trying to out-iterate larger incumbents -- are starting to apply digital twin thinking to their procurement process. They model BOMs, costs, and supply networks on the platform and run scenarios against real supplier data. What happens to landed cost if we shift this part from a casting to a stamping? What if we dual-source this component? What does the cost curve look like if tariffs on this country of origin increase by 15%?
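The tariff question above reduces to re-running a cost model with one input changed. The following is a minimal sketch under stated assumptions, not LightSource's data model or API: the `BomLine` fields, the parts, the prices, the origin labels "A" and "B", and the baseline tariff rates are invented, and the "15%" increase is interpreted as 15 percentage points added to the existing rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BomLine:
    part: str
    unit_cost: float         # supplier price per unit
    quantity: int            # units per finished product
    origin: str              # country of origin (placeholder labels here)
    freight_per_unit: float


def landed_cost(bom, tariff_rates):
    """Per-product landed cost: price plus duty plus freight, over all lines."""
    total = 0.0
    for line in bom:
        duty = line.unit_cost * tariff_rates.get(line.origin, 0.0)
        total += line.quantity * (line.unit_cost + duty + line.freight_per_unit)
    return total


def with_tariff_increase(tariff_rates, origin, added_points):
    """Return a copy of the rates with one origin raised; the input is untouched."""
    updated = dict(tariff_rates)
    updated[origin] = updated.get(origin, 0.0) + added_points
    return updated


# Entirely hypothetical BOM and tariff table.
bom = [
    BomLine("housing", 12.00, 1, "A", 0.80),
    BomLine("bracket", 2.50, 4, "B", 0.10),
    BomLine("pcb", 30.00, 1, "A", 0.50),
]
tariffs = {"A": 0.10, "B": 0.00}

baseline = landed_cost(bom, tariffs)
scenario = landed_cost(bom, with_tariff_increase(tariffs, "A", 0.15))
print(f"baseline={baseline:.2f} scenario={scenario:.2f} delta={scenario - baseline:.2f}")
```

Keeping the scenario as a modified copy of the inputs, rather than editing the baseline in place, is what makes it cheap to run many what-if cases side by side. Dual-sourcing or a casting-to-stamping change would follow the same pattern with a modified BOM instead of a modified tariff table.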

The goal is the same one that worked at Tesla and Waymo: compress the iteration cycle, reduce the cost of learning, and make better decisions faster by testing virtually before committing physically. It applies to vehicle dynamics and autonomous driving. It also applies to whether your BOM is going to cost what you think it will.

Sources

Waymo's cars drive 10 million miles a day in a perilous virtual world -- MIT Technology Review on Waymo's simulation infrastructure and daily mileage

Waymo has now driven 10 billion autonomous miles in simulation -- TechCrunch reporting on Waymo's cumulative simulation milestone

Simulation City: Waymo's most advanced simulation system -- Waymo's blog on their simulation platform architecture

Automotive product development: Accelerating to new horizons -- McKinsey on Chinese OEMs conducting 65% of testing through simulation

Digital Twins in Manufacturing: Separating Hype from Reality -- WWT on common failure modes in digital twin implementations

Manufacturing Predicts 2026: Digital Twins, AI Agents, and Autonomous Operations -- Gartner on digital twin market reaching $183 billion by 2031

Frequently Asked Questions

What is a digital twin in manufacturing?

A digital twin is a software model of a physical system -- a factory, a vehicle, a supply chain -- that is accurate enough to test decisions against before making them in the real world. It can range from a physics-based simulation of crash performance to a cost model of a bill of materials, as long as the model reflects the current state of the physical system it represents.

Why do most digital twin implementations fail?

The most common failure mode is a model that was accurate when it was built but hasn't been updated as the physical system changed. Digital twins require continuous data ingestion to stay aligned with reality. Other failure modes include low iteration volume (using the twin for one-off visualizations instead of high-throughput testing) and lack of closed-loop feedback (insights from the model never making it back into the physical process).

How does virtual testing compare to physical prototyping in automotive?

McKinsey reports that leading Chinese automotive OEMs now conduct about 65% of their testing through simulation, compared to 40-50% elsewhere. Maximizing virtual testing can cut the number of physical prototypes required in half. Physical prototyping remains necessary for final validation, but shifting testing upstream into virtual environments compresses development timelines and reduces cost.

How are digital twins used in autonomous driving?

Companies like Waymo use digital twins of real-world driving environments, built from millions of miles of recorded sensor data, to validate software changes at scale. Waymo's simulation system runs roughly 20 million miles of virtual driving per day, enabling the team to test against rare edge cases that would take decades to encounter through on-road driving alone.

What is the difference between a digital twin and a 3D model?

A 3D model is a visual representation. A digital twin is a functional model that reflects the current state of the physical system and can simulate how that system responds to changes. The distinction is whether you can make a decision based on the model. If the model doesn't track the physical system's current state or can't simulate changes, it's a visualization, not a twin.

How do digital twins apply to procurement and supply chain?

Digital twins are increasingly being applied to model costs, bills of materials, and supplier networks. When an engineer changes a material specification, a supply chain digital twin can immediately show the cost, lead time, and supplier availability implications -- rather than waiting weeks for the sourcing team to manually re-quote. This approach compresses the iteration cycle for procurement decisions the same way crash simulations compress vehicle development.


How does virtual testing compare to physical prototyping in automotive?

McKinsey reports that Chinese automotive OEMs now conduct about 65% of their testing through simulation, compared to 40-50% elsewhere, and estimates that maximizing virtual testing can cut the number of physical prototypes required in half. Physical prototyping remains necessary for final validation, but shifting testing upstream into virtual environments compresses development timelines and reduces cost.

How are digital twins used in autonomous driving?

Companies like Waymo use digital twins of real-world driving environments, built from millions of miles of recorded sensor data, to validate software changes at scale. Waymo's simulation system runs roughly 20 million miles of virtual driving per day, enabling the team to test against rare edge cases that would take decades to encounter through on-road driving alone.

What is the difference between a digital twin and a 3D model?

A 3D model is a visual representation. A digital twin is a functional model that reflects the current state of the physical system and can simulate how that system responds to changes. The distinction is whether you can make a decision based on the model. If the model doesn't track the physical system's current state or can't simulate changes, it's a visualization, not a twin.

How do digital twins apply to procurement and supply chain?

Digital twins are increasingly being applied to model costs, bills of materials, and supplier networks. When an engineer changes a material specification, a supply chain digital twin can immediately show the cost, lead time, and supplier availability implications -- rather than waiting weeks for the sourcing team to manually re-quote. This approach compresses the iteration cycle for procurement decisions the same way crash simulations compress vehicle development.

Faster sourcing. Lower cost. Less chaos.

Try out LightSource and you’ll never go back to Excel and email.

Trusted by:

*GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally, and COOL VENDORS is a registered trademark of Gartner, Inc. and/or its affiliates and are used herein with permission. All rights reserved. Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.